xFire: AI Agents Debate Your Code So You Don't Ship Vulnerabilities and Bugs

Security review is too important for a single opinion and too noisy to tolerate false positives. xFire uses multi-agent adversarial debate to fix both.

Three independent agents review code blindly, then cross-examine findings through a prosecution-defense-judge model.

The Problem: False Positives Drowning Real Issues

Existing static analysis tools cannot distinguish between vulnerabilities and intended features. A deployment script using subprocess.call() gets flagged as command injection. A cryptocurrency wallet handling private keys triggers false alerts. Developers receive reports with twelve false positives for every real finding, causing them to ignore the entire output.

Architecture: Seven Processing Stages

- Context Building — Collects diffs, metadata, related files, git history, configs, and dependencies

- Intent Inference — Establishes what the codebase is designed to do before review begins

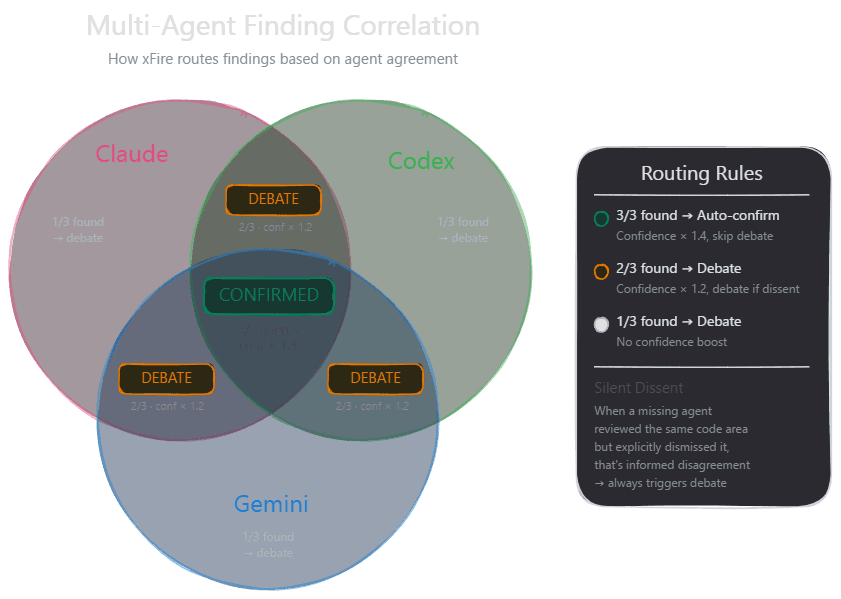

- Independent Blind Review — Claude, Codex, and Gemini analyze code separately

- Finding Extraction — Normalizes outputs into structured findings with severity and CWE tags

- Synthesis & Debate Routing — Clusters similar findings; cross-validated ones gain confidence

- Adversarial Debate — Disputed findings enter prosecution-defense-judge proceedings

- Verdict & Report — Judge's ruling feeds consensus algorithm; final report generated
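To make the Finding Extraction stage concrete, here is a minimal sketch of normalizing one agent's raw output into structured findings with severity and CWE tags. The `Finding` record, the `extract_findings` function, the field names, and the fallback severity are all assumptions made for illustration, not xFire's actual schema.

```python
from dataclasses import dataclass

# Assumed severity scale; the real tool may use different labels.
SEVERITIES = ("low", "medium", "high", "critical")


@dataclass(frozen=True)
class Finding:
    agent: str      # which reviewer reported it
    file: str
    line: int
    cwe: str        # e.g. "CWE-78"
    severity: str   # one of SEVERITIES
    summary: str


def extract_findings(agent: str, raw: list[dict]) -> list[Finding]:
    """Normalize one agent's loosely structured output into Finding records."""
    findings = []
    for item in raw:
        severity = str(item.get("severity", "medium")).lower()
        if severity not in SEVERITIES:
            severity = "medium"  # assumed fallback for unrecognized labels
        findings.append(
            Finding(
                agent=agent,
                file=item["file"],
                line=int(item["line"]),
                cwe=str(item.get("cwe", "")).upper().replace("_", "-"),
                severity=severity,
                summary=item.get("summary", "").strip(),
            )
        )
    return findings


# Hypothetical raw output from one reviewer.
raw_output = [
    {
        "file": "deploy.py",
        "line": "42",
        "cwe": "cwe-78",
        "severity": "HIGH",
        "summary": " shell command built from input ",
    }
]
normalized = extract_findings("claude", raw_output)
```

Normalizing early means the later clustering and debate stages can compare findings from different models field by field instead of parsing free text.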

Intent Inference: Teaching Context

Before agents review anything, the system builds a purpose profile using:

- Heuristic layer (ten signal passes): dependency mapping (40+ mappings), directory structure patterns (15 patterns), security control detection (12 regex patterns), PR intent classification

- LLM enrichment layer (optional): Claude Sonnet validates heuristics and extends capability recognition

This prevents flagging intended capabilities. A coding agent that reads/writes files and executes shell commands should be flagged only for leaking API keys or accepting untrusted instructions — not for its core functionality.
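The heuristic layer can be pictured as a set of lookups that turn repository signals into a set of intended capabilities. The sketch below covers two of the signal types (dependency mappings and directory patterns). The specific mappings, capability names, and function names are hypothetical and chosen only for illustration.

```python
import re

# Hypothetical dependency -> capability mappings (the article mentions 40+).
DEPENDENCY_CAPABILITIES = {
    "paramiko": "remote_shell",
    "web3": "crypto_key_handling",
    "fabric": "deployment_automation",
}

# Hypothetical directory patterns -> capability (the article mentions 15).
DIRECTORY_PATTERNS = {
    r"(^|/)deploy(ment)?/": "deployment_automation",
    r"(^|/)wallets?/": "crypto_key_handling",
}


def build_purpose_profile(dependencies, paths):
    """Return the set of capabilities the codebase appears designed to have."""
    capabilities = set()
    for dep in dependencies:
        capability = DEPENDENCY_CAPABILITIES.get(dep.lower())
        if capability:
            capabilities.add(capability)
    for path in paths:
        for pattern, capability in DIRECTORY_PATTERNS.items():
            if re.search(pattern, path):
                capabilities.add(capability)
    return capabilities


def is_intended_capability(capability, profile):
    """True when a flagged behavior matches something the project is meant to do."""
    return capability in profile


profile = build_purpose_profile(
    dependencies=["Web3", "requests"],
    paths=["scripts/deploy/run.py"],
)
```

A reviewer given this profile could treat key handling in a wallet project as expected behavior while still flagging a capability the profile does not cover.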

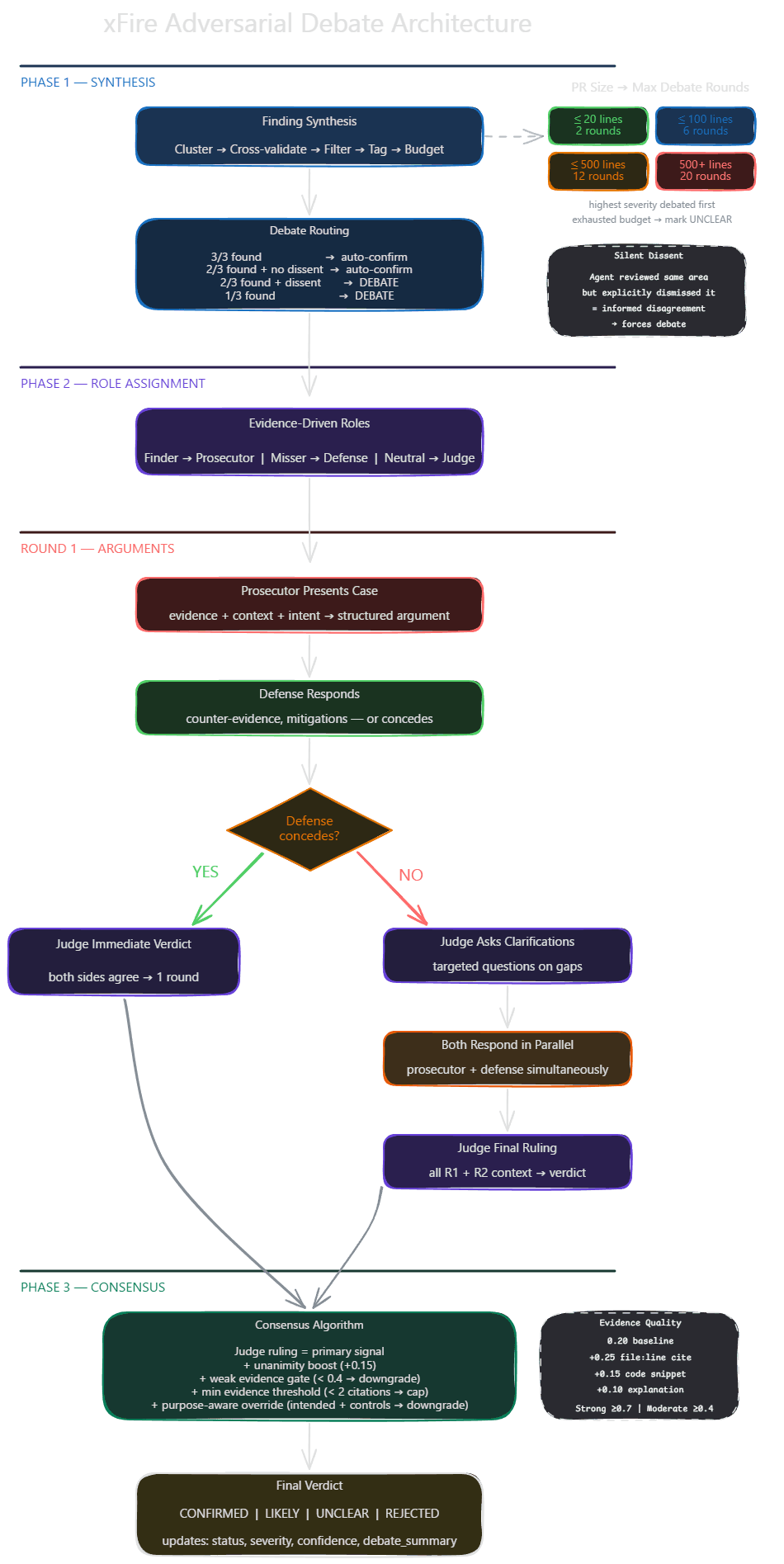

Debate Engine Structure

Role Assignment: Prosecutor (original finder), Defense (dissenter or missing agent), Judge (neutral third party)

Debate Flow:

- Round 1: Prosecution presents evidence; defense counters

- Defense concession check: if both agree, debate ends immediately

- Round 2 (if disputed): Judge asks clarifying questions; both sides respond in parallel

- Judge issues final ruling with position, confidence, and cited evidence
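The debate flow above can be sketched as a short control loop. This is an illustration under stated assumptions, not xFire's implementation: the `Ruling` fields follow the article (position, confidence, cited evidence), but the agent interface (`present`, `counter`, `ask`, `answer`, `rule`), the stub agents, and the confidence assigned on concession are hypothetical. Real agents would call an LLM where the stubs return canned text.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field

CONCESSION_CONFIDENCE = 0.9  # assumed value, not taken from the article


@dataclass
class Ruling:
    position: str                 # "confirmed" or "rejected"
    confidence: float             # 0.0 to 1.0
    evidence: list[str] = field(default_factory=list)
    rounds: int = 1


def run_debate(prosecutor, defense, judge) -> Ruling:
    # Round 1: prosecution presents evidence, defense counters.
    case = prosecutor.present()
    counter = defense.counter(case)

    # Concession check: if the defense agrees, the debate ends immediately.
    if counter["concedes"]:
        return Ruling("confirmed", CONCESSION_CONFIDENCE, case["evidence"], rounds=1)

    # Round 2: judge asks clarifying questions, both sides answer in parallel.
    questions = judge.ask(case, counter)
    with ThreadPoolExecutor(max_workers=2) as pool:
        p_future = pool.submit(prosecutor.answer, questions)
        d_future = pool.submit(defense.answer, questions)
        p_answer, d_answer = p_future.result(), d_future.result()

    # Final ruling with position, confidence, and cited evidence.
    return judge.rule(case, counter, p_answer, d_answer)


class StubProsecutor:
    def present(self):
        return {"claim": "command injection", "evidence": ["deploy.py:42"]}

    def answer(self, questions):
        return "user input reaches the shell unescaped"


class StubDefense:
    def __init__(self, concedes):
        self.concedes = concedes

    def counter(self, case):
        evidence = [] if self.concedes else ["deploy.py:40 validates input"]
        return {"concedes": self.concedes, "evidence": evidence}

    def answer(self, questions):
        return "the allowlist on line 40 rejects shell metacharacters"


class StubJudge:
    def ask(self, case, counter):
        return ["Does the allowlist cover every call site?"]

    def rule(self, case, counter, p_answer, d_answer):
        # A real judge would weigh both answers; the stub sides with the defense.
        return Ruling("rejected", 0.7, counter["evidence"], rounds=2)


conceded = run_debate(StubProsecutor(), StubDefense(concedes=True), StubJudge())
disputed = run_debate(StubProsecutor(), StubDefense(concedes=False), StubJudge())
```

Ending the debate on concession keeps cost down: only findings that remain disputed after round 1 pay for the judge's questions and a second round of responses.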

Silent Dissent Detection

When two agents find an issue and the third does not, the system checks whether the third agent explicitly rejected the finding (informed dissent) or simply never analyzed the affected files (ignorance). Informed dissent signals that the finding needs debate.
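A minimal sketch of that check, assuming each agent's review records which files it analyzed and which candidate issues it explicitly dismissed. The `AgentReview` structure and the `"unclear"` case (file analyzed, issue never mentioned) are assumptions added for illustration; the article describes only the first two outcomes.

```python
from dataclasses import dataclass, field


@dataclass
class AgentReview:
    # Files the agent actually looked at during blind review.
    files_analyzed: set[str] = field(default_factory=set)
    # (file, cwe) pairs the agent considered and explicitly dismissed.
    rejected: set[tuple[str, str]] = field(default_factory=set)


def classify_silence(review: AgentReview, file: str, cwe: str) -> str:
    """Explain why an agent did not report a finding the others raised."""
    if (file, cwe) in review.rejected:
        return "informed_dissent"   # it looked and disagreed
    if file not in review.files_analyzed:
        return "ignorance"          # it never examined the file
    return "unclear"                # assumed third case: analyzed, but no opinion recorded


def needs_debate(review: AgentReview, file: str, cwe: str) -> bool:
    return classify_silence(review, file, cwe) == "informed_dissent"


third_agent = AgentReview(
    files_analyzed={"deploy.py"},
    rejected={("deploy.py", "CWE-78")},
)
```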

Beyond Vulnerabilities: Dangerous Production Bugs

xFire hunts 16 categories of dangerous bugs that traditional scanners miss, including:

- Race conditions corrupting shared state

- Destructive operations without safeguards

- Resource exhaustion paths

- Partial state updates leaving data inconsistent

- Broken error recovery swallowing exceptions

- Connection leaks under error paths

Why Three Agents Beat One

- Blind spots cancel out — Different training data and architectures mean each model catches what others miss

- Independent review prevents anchoring — Parallel isolation ensures genuine analysis, not herding

- Adversarial debate forces evidence — Prosecution must cite specific files/lines; defense must produce counter-evidence; judges evaluate evidence quality

- Cross-validation provides signal — Multiple agents identifying the same issue indicates real findings
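The cross-validation signal can be sketched as clustering followed by an agreement score. This is a simplified illustration: grouping by file, CWE, and nearby line numbers, the `line_window` parameter, and scoring by the fraction of agents that agree are all assumptions, not xFire's consensus algorithm.

```python
from collections import defaultdict


def cluster_findings(findings, line_window=5):
    """Group findings that share a file and CWE and sit within a few lines of each other."""
    by_key = defaultdict(list)
    for finding in findings:
        by_key[(finding["file"], finding["cwe"])].append(finding)

    clusters = []
    for group in by_key.values():
        group.sort(key=lambda f: f["line"])
        current = [group[0]]
        for finding in group[1:]:
            if finding["line"] - current[-1]["line"] <= line_window:
                current.append(finding)
            else:
                clusters.append(current)
                current = [finding]
        clusters.append(current)
    return clusters


def agreement(cluster, total_agents=3):
    """Fraction of reviewers that independently reported this cluster."""
    return len({f["agent"] for f in cluster}) / total_agents


# Hypothetical findings from three blind reviews.
findings = [
    {"agent": "claude", "file": "deploy.py", "line": 42, "cwe": "CWE-78"},
    {"agent": "gemini", "file": "deploy.py", "line": 44, "cwe": "CWE-78"},
    {"agent": "codex", "file": "db.py", "line": 10, "cwe": "CWE-89"},
]
clusters = cluster_findings(findings)
scores = [agreement(c) for c in clusters]
```

In this sketch the `deploy.py` cluster is backed by two of three agents, while the `db.py` finding has a single reporter and would be a natural candidate for debate.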

xFire is open source and published on PyPI. Source code: github.com/Har1sh-k/xfire